As part of the Image team at the GREYC lab (CNRS, ENSICAEN, University of Caen), I implemented the “fill by line art” algorithm in GIMP, also known as “Smart Colorization”. You may know this algorithm from G’Mic (developed by the same team), so when they offered to have me work with them, I wanted to implement this algorithm in GIMP core. Thus it became my first assignment.

The Problem

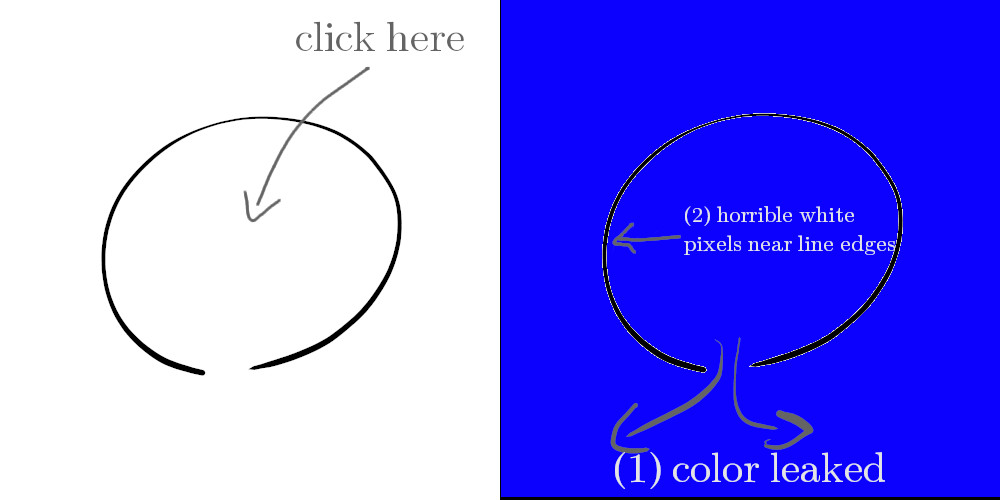

The concept of filling by line art is simple: you draw a shape with a black pen, say an approximate circle, and you want to fill the inside with a color of your choice. You could already do this, more or less, with the bucket fill, when filling by color similarity. Unfortunately this has 2 major issues:

- If the line art is not properly closed (“holes” in the lines), the color will leak outside. Sometimes this is just a small painting mistake, yet if you don’t see the holes (a hole could be just 1 pixel wide in the worst case), wasting time finding them is no fun. Other times it may even be an artistic choice (a “rougher” line style for instance, or any of the billion reasons why you’d want non-closed lines).

- Usually you get very ugly non-colored pixels next to line borders because of interpolation, aliasing or whatever (unless you draw with very hard lines), and this is not an acceptable result.

As a consequence, probably no digital colorist ever uses the bucket fill directly. There are various other methods, often with the fuzzy select tool (or other selection tools), growing/shrinking the selection then bucket-filling it. Sometimes just painting directly is even the best option. Attending one of Aryeom’s workshops on colorization is absolutely amazing and enlightening, as she can teach you a dozen different methods, and she herself does not always use the same one (she would say it depends on the situation). On the ZeMarmot project, I also made some custom quick-colorization Python scripts which Aryeom has used for years now and which do a pretty decent job of optimizing this tedious task (though it’s still tedious!).

The algorithm

The research paper is called “A Fast and Efficient Semi-guided Algorithm for Flat Coloring Line-arts”. I worked from C++ code by Sébastien Fourey, with input from both him and David Tschumperlé, who are both co-authors of the paper.

For our needs, it basically has 2 main steps:

- Closing the line arts, which is done by finding “key-points” which are line edges with extreme local curvatures (we estimate them likely to be the end for unfinished lines), then closing the lines by joining the keypoints based on some “quality” criteria such as close opposite angles or maximum distances. Lines can be closed either with splines (i.e. curves) or straight segments.

- Actually colorizing the created closed regions, “eating” a bit under the line art pixels to ensure absence of uncolorized pixels near borders.
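To give a feel for what a “quality” criterion for joining two key-points can look like, here is a minimal Python sketch. It is only an illustration of the idea (maximum distance plus roughly opposite directions), not the criteria from the paper nor the C code in GIMP; the function name `can_join`, its parameters and the default values are all hypothetical.

```python
import math

def can_join(p, dp, q, dq, max_gap=10.0, max_angle_deg=45.0):
    """Return True if two line ends look like the two sides of one hole.

    p, q   : (x, y) positions of the two candidate key-points
    dp, dq : unit vectors pointing outward, the way each line would continue
    max_gap and max_angle_deg are illustrative thresholds (assumptions).
    """
    gap = math.dist(p, q)
    if gap == 0 or gap > max_gap:
        return False
    # Unit direction going from p to q.
    ux, uy = (q[0] - p[0]) / gap, (q[1] - p[1]) / gap
    cos_limit = math.cos(math.radians(max_angle_deg))
    # p must point toward q, and q must point back toward p.
    p_faces_q = dp[0] * ux + dp[1] * uy >= cos_limit
    q_faces_p = dq[0] * -ux + dq[1] * -uy >= cos_limit
    return p_faces_q and q_faces_p
```

Two ends facing each other across a small gap pass the test; ends that are too far apart, or that point away from each other, do not. A real implementation would rank all candidate pairs rather than answer yes or no for a single pair.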

You can see that the algorithm actually deals with both issues previously raised (which I conveniently listed in the same order)! Nevertheless I only implemented the first step of the algorithm and went with my own solution for the second step (still based on similar concepts though), because of usability issues.

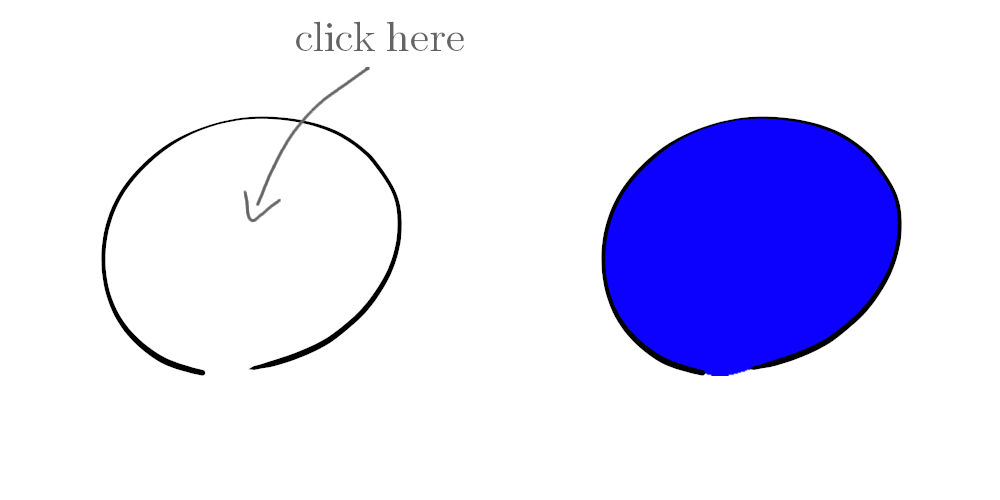

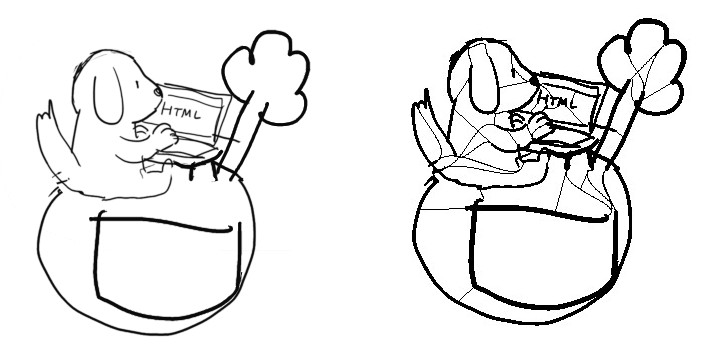

Here is what bucket-filling by line art looks like in GIMP:

I am not going to re-explain the algorithm in full. If you are interested in the technical details, I suggest that you just read the research paper, which is quite clear and has nice self-explanatory images too. If, like me, you are more “fluent” in code than in math equations, you may also look at my implementation in GIMP, which is mostly self-contained in gimplineart.c; gimp_line_art_close() in particular is a good place to start.

Below I will focus more on the improvements we made to the algorithm, which you won’t find in the paper. As a reminder, we also worked with the animator/painter Aryeom Han (the director of the ZeMarmot project) as artist advisor so that the implementation is actually useful for real work.

Note: this article is mostly about the more technical side of things. If you are just interested in how to use the tool efficiently, wait a few days or weeks. We will make a short yet (hopefully) exhaustive video showing the usage of every option.

Step 1: line art closure

To get a basic idea of what’s going on under the hood, here is an example (using an unfinished sketch by Aryeom; sketches are good examples for the purpose of the algorithm as they are full of holes!). The leftmost image is the scribble as-is, the middle one is how it is transformed by the algorithm (you never see this version of the image!), and the rightmost one is a quick attempt of mine to flat-colorize it (using the bucket fill tool only, no brush, nothing!) in less than 1 minute (no joke, I timed it!).

Note: of course do not look at this result from a “finished work” point of view. Colorization work is often done in several steps, the first step being flat colors. This tool is only working on this first step and the image may still need additional tweaks (even more with this example as I ran it off a very rough sketch image).

Estimate a local line width (improved from paper)

I very quickly noticed that a part of the algorithm seemed a bit suboptimal. As said above, we need to detect key-points based on line curvatures. This was a problem with big brushes (either because you paint very fat lines on a small image or because you paint very high resolution artworks, where even your thin lines may be dozens of pixels wide). The ends of such lines may have very low curvatures and go undetected. In the original paper, the proposed solution was:

For the sake of independence with respect to the image resolution, a second step allows to reduce the width of the strokes to a few pixels, if necessary, using a morphological erosion. The radius to be used for this erosion is set automatically by estimating the width of the strokes found in the drawing.

Section ‘3. Pre-Processing of the anti-aliased image‘

Unfortunately, computing a single estimate of the line width for the whole drawing assumes that all lines in the same drawing have the same thickness! Just ask a calligrapher how ridiculous that sounds! 😉

As a first consequence, even though it still worked many times, it could add previously non-existing holes (when eroding a line thinner than the average line width). The research paper acknowledged this issue but dismissed it, assuming the closure step (which necessarily follows) would close the new hole again anyway:

One should note that disconnections that may result from the morphological erosion step applied here, which remain rare, will be corrected anyway by the closing step to be applied thereafter.

Section ‘3. Pre-Processing of the anti-aliased image‘

Yet in very early tests which Aryeom did with the first version of my implementation, she quite quickly encountered cases where the closing step did not close the created holes. In at least one instance, we even had a perfectly closed line art which leaked the color fill ⇒ we got the reverse of what the algorithm was created for! Quite paradoxical! To make things worse, while finding micro holes in badly closed lines can be hard, finding non-existing holes (because they were only created in an internal, non-visible representation) is close to impossible.

And the ironic part is that the erosion did not even achieve its goal that well, as it was very easy to create fat lines where no key-points were found despite the erosion. In the end, the global width estimation + erosion idea added more problems than it solved.

Conclusion: this was bad.

After much discussion with David Tschumperlé and Sébastien Fourey, we came up with an evolution of the algorithm, computing an approximate local line width for every line art pixel (instead of one single line width for the whole drawing), simply based on a distance map of the line art. Then we made the test deciding whether an edgel curvature was key-point material relative to the local line width (instead of eroding and assuming a theoretical global line width).

This performed a lot better: it did not create unfillable holes in perfectly closed areas, it detected end points better on very fat strokes, and it allowed strokes of variable width in the same drawing (whether as a stylistic choice or simply because perfection is hard). It even ended up faster!
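Here is a minimal Python sketch of how a distance map gives a per-pixel line width estimate. It is an illustration under simplifying assumptions, not the GIMP implementation: it uses a city-block distance computed by breadth-first search, treats pixels outside the image as background, and the names `distance_map` and `local_line_width` are hypothetical.

```python
from collections import deque

N4 = ((1, 0), (-1, 0), (0, 1), (0, -1))

def distance_map(mask):
    """City-block distance from each line pixel to the nearest non-line pixel.

    mask is a list of rows; truthy cells are line art. Background cells get 0.
    """
    h, w = len(mask), len(mask[0])
    dist = [[0] * w for _ in range(h)]
    queue = deque()
    # Seed the search with line pixels that touch the background.
    for y in range(h):
        for x in range(w):
            if mask[y][x] and any(
                not (0 <= y + dy < h and 0 <= x + dx < w) or not mask[y + dy][x + dx]
                for dy, dx in N4
            ):
                dist[y][x] = 1
                queue.append((y, x))
    # Propagate inward, one ring of pixels at a time.
    while queue:
        y, x = queue.popleft()
        for dy, dx in N4:
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and dist[ny][nx] == 0:
                dist[ny][nx] = dist[y][x] + 1
                queue.append((ny, nx))
    return dist

def local_line_width(dist, y, x):
    """Approximate stroke width around a line pixel (0 for background)."""
    if dist[y][x] == 0:
        return 0
    h, w = len(dist), len(dist[0])
    while True:
        # Climb toward the center of the stroke, where the distance peaks.
        best = max(
            ((dist[y + dy][x + dx], y + dy, x + dx)
             for dy, dx in N4
             if 0 <= y + dy < h and 0 <= x + dx < w),
            default=(0, y, x),
        )
        if best[0] <= dist[y][x]:
            return 2 * dist[y][x] - 1
        _, y, x = best

# One thin stroke (row 1) and one fat stroke (rows 4 to 6) in the same drawing.
mask = [[1 if y in (1, 4, 5, 6) else 0 for x in range(7)] for y in range(8)]
dist = distance_map(mask)
thin = local_line_width(dist, 1, 3)  # 1
fat = local_line_width(dist, 4, 3)   # 3
```

The two strokes get different widths, which is exactly what a single global estimate cannot express. A curvature test can then be scaled by this local value instead of eroding the whole image.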

As an example, the original version of the algorithm would have failed to detect end-points for, and close this shape with such fat lines. The updated algorithm has no such problem:

» For those interested, see the commit «

Parallelization for fast processing

Line art closure was clearly the most time-expensive step. Though it does perform quite well on FullHD or even 4K images, it can still spend half a second on my laptop. And half a second for an interactive tool is huge! And if we run this on very big images (which are not uncommon at all nowadays), it may even take several seconds.

So I parallelized this whole process in order to run it as early as possible (as soon as the Bucket Fill tool is selected). As people are not robots, this makes the interaction quite seamless and you may not even notice the processing.
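The “start early, wait only when needed” pattern can be sketched in a few lines of Python. This is not how GIMP does it internally (GIMP is written in C); the class `LineArtCache` and its method names are hypothetical, and `compute_closure` stands for whatever expensive function computes the closed line art.

```python
from concurrent.futures import ThreadPoolExecutor

class LineArtCache:
    """Run the expensive closure in the background as soon as it may be needed."""

    def __init__(self, compute_closure):
        self._compute = compute_closure
        self._pool = ThreadPoolExecutor(max_workers=1)
        self._future = None

    def on_tool_selected(self, image):
        # Called when the tool is selected or the source changes:
        # drop any pending job and start a fresh one right away.
        if self._future is not None:
            self._future.cancel()
        self._future = self._pool.submit(self._compute, image)

    def on_click(self):
        # Called at fill time: usually the result is already there,
        # otherwise this blocks until the computation finishes.
        if self._future is None:
            raise RuntimeError("no computation was started")
        return self._future.result()

    def close(self):
        self._pool.shutdown(wait=True)

# Stand-in for the real closure computation (hypothetical).
cache = LineArtCache(lambda image: sum(image))
cache.on_tool_selected([1, 2, 3])
closure = cache.on_click()
cache.close()
```

Since a human needs some time between selecting the tool and clicking in the image, the wait in `on_click()` is rarely noticeable.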

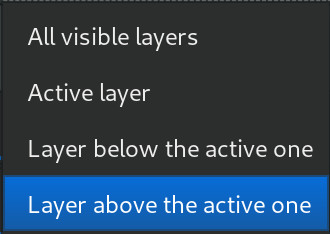

Partially for the same reason, you may notice that the “Source” option offers more choices than in other tools (which only have “Sample merged” versus the active layer). Here you can also choose the layer above or below the active one. There was both a logical reason (you do not necessarily want colors to be counted as line art) and a performance one (the software does not have to recompute the closure at every edit).

Step 2: color filling

Making the algorithm interactive and error-safe

The original algorithm was based on filling all zones at once by using a watershed algorithm to fill the whole image.

I made the choice to just drop this part of the algorithm. It was mostly a usability choice. Basically the first time I saw the images demonstrating the algorithm in G’Mic, I found the result cool, but the GUI of the implementation seemed completely impractical. Still, I am not the painter in the team, so I showed it to Aryeom. Her first words after seeing how it worked were “I will never use this” (or something along those lines!). Note that we are not talking about the end result, but about the human-computer interaction. As we said, colorizing is tedious, but if the smart colorization is even more tedious, then why use it?

So what’s tedious? There were several variant interactions in G’Mic: you can for instance let the algorithm color every zone randomly, then select each flat color zone for recolorization; there is also the possibility to guide the algorithm with color spots, hoping it performs as you want. I also suggest this interesting article by David Revoy, who contributed to the original version of the algorithm.

![filtre « Colorize lineart [smart coloring] »](https://img.linuxfr.org/img/68747470733a2f2f74736368756d7065726c652e75736572732e67726579632e66722f6c6672342f735f676d69635f736d6172745f636f6c6f72696e672e6a7067/s_gmic_smart_coloring.jpg)

Maybe they were interesting workflows sometimes, yet this is not always something you want to do in your drawings.

For one, it takes a lot of steps to color a single drawing. For an animation (i.e. the ZeMarmot project), this is even worse, as we do it for dozens or hundreds of layers.

A second reason is that such workflows assume that the algorithm is never wrong. And we know that this is a very bold assumption! Undesired results may happen. This is not necessarily a problem; what you ask of such an algorithm is to work well most of the time, as long as you can always go back to more down-to-earth methods in the rare edge cases. But if you have to undo the colorization for the whole image, change the color spots while trying to guess what went wrong, and try again, then using a “smart” algorithm is only a waste of time.

Instead we needed a colorization process which is interactive and progressive, so that errors of the algorithms are easily bypassable by temporarily reverting to traditional techniques (just for some zones). This is why I based my interaction on the existing bucket fill tool. Colorization (by line arts) should work as it always used to: you click in a zone and see it being filled in front of your eyes… one zone at a time!

It is straightforward and very error-safe. If the algorithm doesn’t detect the zone you are working on properly, just undo and fix this zone only.

Moreover I really wanted to not have to create a new tool if possible (there are so many already). This is basically the same use case as the Bucket Fill tool, right? It’s just a different algorithm to decide how to fill, that’s all. So it makes perfect sense to be an option for this same tool.

Therefore my solution to replace watershedding the whole image at once is to use, once again, a distance-transform map of the lines (both the original line art and the invisible closure lines). As a reminder, this is a decent estimation of the local line thickness. Then when the bucket fill occurs, I flood the color a few more pixels under the lines (up to half the width of the line art), thus ensuring we don’t leave ugly non-colorized holes near the borders. Simple, fast and efficient.

This is in a way a local watershedding, but simpler and much faster. It also made it possible to add a “Max flooding” option to keep the color under control.
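The following Python sketch shows one way to express this “fill, then flood a bit under the lines” idea. It is a simplification and not the GIMP code: it assumes a precomputed distance map `dist` where free pixels are 0 and line pixels hold their distance to the edge of the line, it only lets the color climb toward the center of a line, and the function name `fill_zone` and parameter `max_flooding` are hypothetical stand-ins.

```python
from collections import deque

N4 = ((1, 0), (-1, 0), (0, 1), (0, -1))

def fill_zone(dist, seed, max_flooding=3):
    """Return the set of (y, x) pixels colored by a click at seed.

    dist[y][x] == 0 for free pixels; inside lines it is the distance to the
    line edge (1 on the edge, growing toward the center of the stroke).
    """
    h, w = len(dist), len(dist[0])
    if dist[seed[0]][seed[1]] != 0:
        return set()  # clicked on a line: nothing to fill in this sketch
    filled = {seed}
    queue = deque([seed])
    while queue:
        y, x = queue.popleft()
        for dy, dx in N4:
            ny, nx = y + dy, x + dx
            if not (0 <= ny < h and 0 <= nx < w) or (ny, nx) in filled:
                continue
            if dist[ny][nx] == 0:
                # Free pixels are only reached from other free pixels, so the
                # color never crosses a line into the neighboring zone.
                ok = dist[y][x] == 0
            else:
                # Under a line: only climb toward its center, and not deeper
                # than max_flooding pixels.
                ok = dist[y][x] < dist[ny][nx] <= max_flooding
            if ok:
                filled.add((ny, nx))
                queue.append((ny, nx))
    return filled

# A vertical line 3 pixels wide (columns 2 to 4) splitting the image in two.
row = [0, 0, 1, 2, 1, 0, 0]
dist = [row[:] for _ in range(3)]
left_zone = fill_zone(dist, (1, 0))
```

Clicking on the left side colors columns 0 to 3: the free area plus the line up to its center, and nothing on the right side. With `max_flooding=0` the fill stops exactly at the line edge.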

Using smart colorization without the “smart” part!

One very cool usage of this new algorithm is to bypass the first step, i.e. not compute the line art closure at all! This can be very useful when you are using a line style without any holes (a simpler design with strong lines), so that you don’t need any algorithmic help for line closure. This way, you can fill colors in a single click without caring about over-segmentation or processing time.

To do so, just set the option “Maximum gap length” to 0. Here is an example of a very simple design (left), filled by similar colors (middle) vs by line art detection (right), in a single click:

See this kind of “white halo” between the red color and the black lines in the middle image? The quality difference with the right one is striking, right? While the historical “Fill similar colors” is absolutely unusable (by itself) for quality colorization work, the new line art detection mode is perfectly usable.

Bucket Fill enhanced for all cases!

As a bonus, I had to improve the existing interaction of the Bucket Fill tool for all algorithms as well. It is mostly similar, but a bit improved. What changed?

Click and hold

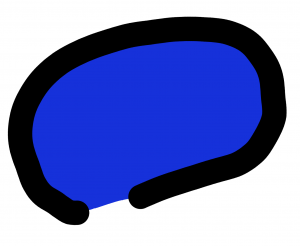

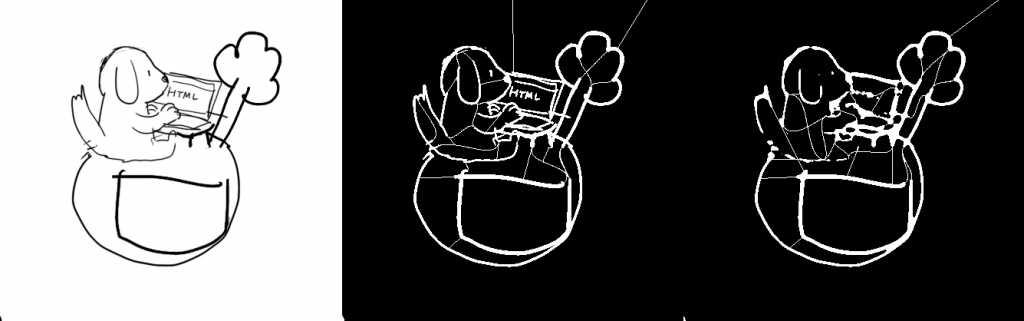

A main issue was the problem of over-segmentation. You may call an algorithm “smart” or “intelligent”, but this won’t make it “human-smart”. In particular here it creates artificial lines based on geometry, not on actual recognition of shapes or meaning, and even less by reading the painter’s thoughts! So it will often over-segment, i.e. create more artificial zones than desired (if you paint with a crenellated brush, then it can be really bad). Let’s take the previous image again as an example:

You can clearly see the issue. The dog for instance is most likely in a single color, but it ends up as about 20 zones! In such a case, with the former bucket fill interaction, you would end up clicking 20 times (instead of ideally once). Counter-productive, right? So I updated the Bucket Fill tool to work more like a brush. You can click, hold and move the cursor over various regions. This change works both with the line art algorithm and when filling similar colors (it is only meaningless when filling a selection). This makes filling over-segmented areas much less of a problem, as you can still do it in a single stroke. This is not as fast as a single click, yet quite quick.

Color picking

Another nice change was to allow easy color picking with ctrl-click (no need to select the Color Picker tool). All paint tools could already do this, but not the Bucket Fill, even though it also works with colors. Being able to quickly change color (by picking nearby pixel, which is a very common usage by professional digital painters) makes the bucket fill tool very productive.

With these few changes, the Bucket Fill is now a first class citizen (even if you don’t use the smart colorization).

Limits and possible future works

Algorithm targeted at digital painters

Smart colorization works on “line arts”. This algorithm won’t perform well on random “non line art” images, and in particular is not made to work on photography. Though I’m sure some people may find interesting ways to use it elsewhere, as far as I can see, this is designed for digital painters only.

More than black line on white background use case?

Lines are detected the most basic possible way, with a threshold either on the alpha channel (if you work with transparent layers) or on the grayscale value (particularly useful when working on white paper scans for instance).

Therefore the current version of the algorithm may have difficulties detecting the line art, for instance if you scan a drawing done on paper that is not completely white. Or say you simply want to draw with light lines on a dark background (everyone is free!). I am not sure how often this occurs, and whether we should really pile up color options in the tool. We’ll see.
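A minimal Python sketch of this kind of thresholding, and of its limitation, could look as follows. It is not the GIMP code: the function names, the default threshold, the direction of the comparisons and the gray conversion (a plain weighted average of the color channels) are all assumptions made for the illustration.

```python
def is_line_pixel(r, g, b, a, use_alpha, threshold=0.5):
    """Decide whether one pixel belongs to the line art.

    All channels are floats in [0, 1]. The threshold value is illustrative.
    """
    if use_alpha:
        # Transparent layer: anything opaque enough is a stroke.
        return a > threshold
    # Opaque image (e.g. a scan): anything dark enough is a stroke.
    gray = 0.2126 * r + 0.7152 * g + 0.0722 * b
    return gray < threshold

def line_art_mask(pixels, use_alpha, threshold=0.5):
    """pixels is a list of rows of (r, g, b, a) tuples."""
    return [
        [is_line_pixel(r, g, b, a, use_alpha, threshold) for (r, g, b, a) in row]
        for row in pixels
    ]

# A light stroke on a dark background defeats the grayscale test:
# the background is detected as line art and the stroke is not.
light_on_dark = [[(0.9, 0.9, 0.9, 1.0), (0.0, 0.0, 0.0, 1.0)]]
mask = line_art_mask(light_on_dark, use_alpha=False)
```

With such a simple test there is no notion of “line color”, which is why off-white paper or light-on-dark drawings are harder to handle.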

More optimization

Though I said it is very usable on many images of reasonable size, I consider this new algorithm still a bit slow when working on very big images (which is not so uncommon in the printing industry, where you often need higher resolutions than in the screen industry), even with all the multi-threaded code. So I would not be surprised if in some cases you went back to your old-style colorization techniques.

I hope to be able to get back to my code soon and optimize it more.

Not so nice color borders

Color on borders does not have the nice “texture” you have when colorizing with brushes. For instance let’s look closer at our original example here.

The part where the border of the color shows will likely need editing. I added an “antialiasing” option, but this is clearly not the real solution in most cases either, and a manual edit with a brush after color filling may be necessary.

The worst case is when you want to remove the lines afterwards. Aryeom sometimes uses a drawing style where the lines are only there for the first steps, then removed for the final render (a whole dreamy scene of ZeMarmot is actually done like this). In that case you’d want perfect control over the quality of the colorization border. This is one such example where the final painting is made only of colors, with no border lines:

No API yet

We are still missing API functions to be able to run line art detection in scripts. This is on purpose, as I am waiting a bit more to make sure I don’t miss any important usage, since an API has to be stable (unlike the GUI, which can be updated more easily since our new release policy).

Actually you may have noticed that even in the graphical interface, I haven’t made visible all the options you can find in G’Mic (even though they are all implemented). This is because I am trying not to make this tool over-confusing, as many of these options require an intimate understanding of the algorithm. So that no one has to constantly play with sliders at random and without understanding, I am testing the best way to not over-expose options.

Fuzzy selection tool

This algorithm is currently only implemented for the bucket fill tool, but it would be perfectly suited as an alternative method in the Fuzzy Select tool as well!

Improve segmentation issue

With very clean lines and simple shapes, the algorithm is very neat. But as soon as you make complicated drawings, and in particular use very crenellated brushes (for instance the default “Acrylic” series of GIMP brushes), it starts to detect a few too many false-positive end points, hence over-segmenting. We encountered such issues, so I tried various improvements, but so far none works in all cases. One such improvement was to apply a median blur on the line art before the detection of end points, as it would definitely smooth out dents in the lines. And it did work quite nicely on one such example:

Right: still segmented, yet much better, when median-blurring first

Unfortunately it works very badly on very thin lines as it would create holes (a problem we got rid of when we replaced the erosion step with local line widths!).

Right: bad result as color would leak and we lost many details
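Both effects are easy to reproduce on a toy example. The Python sketch below applies a 3×3 median to a binary line mask, which for binary data is just a majority vote over the 9 pixels of the window. It is a simplification for illustration (the real blur works on the actual image data, and pixels outside the image are counted as background here); the function name `median_blur_3x3` is hypothetical.

```python
def median_blur_3x3(mask):
    """3x3 median of a binary mask (list of rows of 0/1 values)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ones = sum(
                mask[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
            )
            # The median of 9 binary values is 1 when at least 5 of them are 1.
            out[y][x] = 1 if ones >= 5 else 0
    return out

# A fat stroke (rows 2 to 4) with a one-pixel dent sticking out at (1, 3).
fat = [[1 if 2 <= y <= 4 else 0 for x in range(7)] for y in range(7)]
fat[1][3] = 1
smoothed = median_blur_3x3(fat)

# A stroke which is a single pixel thin (row 2).
thin = [[1 if y == 2 else 0 for x in range(5)] for y in range(5)]
erased = median_blur_3x3(thin)
```

On the fat stroke the dent disappears while the body of the stroke stays, which removes a false end point. On the thin stroke no window ever contains 5 line pixels, so the whole line vanishes: this is the kind of hole-creating behavior described above.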

So I’m still working on this. Ideally that’s an issue for which we can find a solution, though it is not an easy topic. We’ll see!

As a general rule, over-segmenting (false positives) is a problem, but for such a tool it is better than failing to close holes (false negatives), especially thanks to the new click-and-hold interaction. I have already fixed several issues in this area (for instance micro-zones, which the paper calls “not significant regions” yet which are very significant for digital painters as they are annoying to fill; and recently an issue with the algorithm chosen to approximate region areas very quickly, which may be wrong on open areas).

Conclusion

I will conclude by saying that this project was a very interesting opportunity to confront research algorithms with the reality of day-to-day work. It was even more interesting as we brought together 3 worlds: research (an algorithm devised by brilliant minds at CNRS/ENSICAEN), engineering (myself) and artists (Aryeom/ZeMarmot). By the way, many of my GUI improvements were ideas and suggestions from Aryeom, who tested our work on real projects in the field. This joint CNRS/ZeMarmot cooperation went so well that Aryeom was invited to present her work and all her drawing/animation issues in a seminar at ENSICAEN.

Now it is still a work-in-progress in my opinion. As you could see, several aspects deserve to be improved. It is not my main focus anymore, but I will definitely improve it as I see fit. Yet it is already in a releasable state, and I do hope that many people will use and appreciate it. Tell us what you think if you try it!

A last comment is that the ideas behind the best algorithms are not necessarily the most incredible technically. This Smart Colorization algorithm is based on many simple transformations, yet it performs well, is quite fast (within some limits, as I said above), and does not take all your memory nor make GIMP hang for 10 minutes… To me, this is much more impressive than algorithms which may be more brilliant yet are unusable on everyone’s desktop. This is what you need in graphics desktop software when you actually want to get some work done. And that’s very cool. 🙂

Have fun everyone!